Part 1 - Creating a Neural Network Based MAUI Mobile App with a Neural Network Designed and Trained in Google Colab

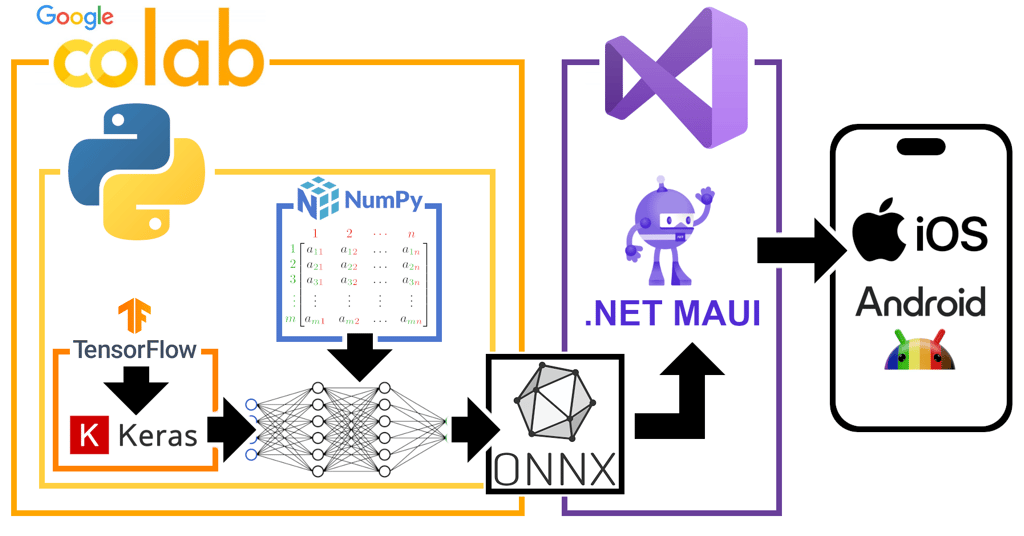

Having done a master's degree in Artificial Intelligence and then switched my attention to writing cross-platform mobile apps, I want to drag it back to AI while continuing to work on .NET MAUI. So I'm doing some experimental work creating and training neural networks in Python/Keras on Google Colab and then exporting them to .onnx files so they can be deployed in .NET MAUI apps. I'm seeing what's possible before deciding what interesting NN-based apps I might want to make.

MAUI DEV STORIES

Stephen Moreton-Howell

4/23/2026, 6 min read

Python is the usual language for designing neural networks, and Keras is a well-established library for it. That's what I'm using here. I'm developing my neural networks in Google Colab, whose notebooks are easy and free to get started with. A main reason to use Python is that one of the defining characteristics of neural networks is the processing of large multi-dimensional grids of numbers (tensors), and Python, together with standard libraries like NumPy, handles that efficiently in very little code.
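As a rough illustration of the "very little code" point, here is a minimal sketch that sums every row of a 2D NumPy array in a single call, with no explicit loops. The array values are made up for the example.

```python
import numpy as np

# Hypothetical data: 3 rows of 10 numbers each (a 2D tensor of shape (3, 10))
grid = np.array([
    [1, 2, 3, 4, 5, 6, 7, 8, 9, 10],
    [0, 1, 0, 1, 0, 1, 0, 1, 0, 1],
    [10, 10, 10, 10, 10, 10, 10, 10, 10, 10],
], dtype=np.float32)

# Sum along axis 1 (across each row) in one vectorised call
row_sums = grid.sum(axis=1)

print("Shape:", grid.shape)
print("Row sums:", row_sums)
```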

# Save the model as TensorFlow Lite (run this after the model has been trained)

converter = tf.lite.TFLiteConverter.from_keras_model(model)

tflite_model = converter.convert()

with open('model.tflite', 'wb') as f:

    f.write(tflite_model)

from google.colab import files

files.download('model.tflite')

# Save the model as ONNX

!pip install tf2onnx

# Write the trained model to disk so the converter has a file to read

model.save('saved_model.keras')

# Convert to ONNX

!python -m tf2onnx.convert --keras saved_model.keras --output my_model.onnx

# Download

from google.colab import files

files.download('my_model.onnx')

The Tools for the Job

So I'll start by stepping through a simple example of creating and training a neural network using these tools.

First import the necessary libraries:

import tensorflow as tf

from tensorflow import keras

import numpy as np

Next, create an array of training data. By convention this is called X_train. In this initial test I'm simply trying to train an AI to look at a list of 10 numbers and decide whether their sum falls into one of three categories: low, medium or high. So to train the NN how to spot this I generate a whole load of lists of 10 numbers and tell the NN which category each falls into. In that way (hopefully) it learns the pattern and can correctly classify future lists of 10 numbers that weren't in the training set.

Whether this actually works very well is not the point. The point is to create an initial NN and experiment with exporting it to MAUI.

So, here's that training data. We use the NumPy library to create a 2D array. The model (the NN) will learn to classify each of the rows here:

X_train = np.array([

[1, 2, 3, 4, 5, 6, 7, 8, 9, 10], # Sum = 55 (Medium)

[0, 1, 0, 1, 0, 1, 0, 1, 0, 1], # Sum = 5 (Low)

[10, 10, 10, 10, 10, 10, 10, 10, 10, 10], # Sum = 100 (High)

[2, 2, 2, 2, 2, 2, 2, 2, 2, 2], # Sum = 20 (Low)

[5, 5, 5, 5, 5, 5, 5, 5, 5, 5], # Sum = 50 (Medium)

[8, 9, 8, 9, 8, 9, 8, 9, 8, 9], # Sum = 85 (High)

[1, 1, 1, 1, 1, 1, 1, 1, 1, 1], # Sum = 10 (Low)

[5, 5, 5, 5, 5, 5, 5, 5, 5, 5], # Sum = 45 (Medium)

[9, 9, 9, 9, 9, 9, 9, 9, 9, 9], # Sum = 90 (High)

[3, 3, 3, 3, 3, 3, 3, 3, 3, 3], # Sum = 30 (Medium)

[1, 2, 3, 4, 5, 6, 7, 8, 9, 10], # Sum = 55 (Medium)

[0, 1, 0, 1, 0, 1, 0, 1, 0, 1], # Sum = 5 (Low)

[5, 5, 5, 5, 5, 5, 5, 5, 5, 5], # Sum = 50 (Medium)

[2, 2, 2, 2, 2, 2, 2, 2, 2, 2], # Sum = 20 (Low)

[5, 5, 5, 5, 5, 5, 5, 5, 5, 5], # Sum = 50 (Medium)

[8, 9, 8, 9, 8, 9, 8, 9, 8, 9], # Sum = 85 (High)

[1, 1, 1, 1, 1, 1, 1, 1, 1, 1], # Sum = 10 (Low)

[5, 5, 5, 5, 5, 5, 5, 5, 5, 5], # Sum = 45 (Medium)

[9, 9, 9, 9, 9, 9, 9, 9, 9, 9], # Sum = 90 (High)

[3, 3, 3, 3, 3, 3, 3, 3, 3, 3], # Sum = 30 (Medium)

], dtype=np.float32)

Next, the classifications. The y_train variable is a 1D array where each element is the correct classification for the corresponding row in X_train. The model will use this to adjust its weights and hopefully, after training on all this data, it ends up able to correctly classify sets of 10 numbers that it hasn't yet seen.

# Training labels - corresponding classifications

# 0 = Low (sum < 30)

# 1 = Medium (30 <= sum < 70)

# 2 = High (sum >= 70)

y_train = np.array([1, 0, 2, 0, 1, 2, 0, 1, 2, 1, 1, 0, 1, 0, 1, 2, 0, 1, 2, 1], dtype=np.int32)

print("Training Data Shape:", X_train.shape)

print("Training Labels Shape:", y_train.shape)

print("\nFirst training example:")

print("Input:", X_train[0])

print("Label:", y_train[0], "(Medium)")
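Typing rows and labels by hand is error-prone, so a larger training set could be generated automatically. The following is a minimal sketch that assumes the same thresholds as the comments above (30 and 70). The function name `make_dataset`, the value range 0 to 10, the seed and the sample count are hypothetical choices for illustration only.

```python
import numpy as np

def make_dataset(n_samples, seed=0):
    """Generate random rows of 10 integers (0 to 10) and label each by its sum."""
    rng = np.random.default_rng(seed)
    # integers() excludes the upper bound, so 11 gives values 0..10
    X = rng.integers(0, 11, size=(n_samples, 10)).astype(np.float32)
    sums = X.sum(axis=1)
    # np.digitize maps sum < 30 -> 0 (Low), 30 <= sum < 70 -> 1 (Medium),
    # sum >= 70 -> 2 (High)
    y = np.digitize(sums, bins=[30, 70]).astype(np.int32)
    return X, y

X_gen, y_gen = make_dataset(1000)
print("Shapes:", X_gen.shape, y_gen.shape)
print("Class counts (Low, Medium, High):", np.bincount(y_gen, minlength=3))
```

The arrays returned here have the same dtypes as X_train and y_train, so they could be passed to the training step in their place.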

Next, create the neural network. This is where we use the Keras library.

# Create the neural network

model = keras.Sequential([

    keras.layers.Dense(128, activation='relu', input_shape=(10,)),

    keras.layers.Dropout(0.2),

    keras.layers.Dense(64, activation='relu'),

    keras.layers.Dense(3, activation='softmax') # 3 output classes

])
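The final layer uses a softmax activation, which turns three raw scores into three probabilities that sum to 1. Here is a minimal plain-Python sketch of that calculation. The input scores are made up, and Keras computes this internally on tensors rather than lists.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    # Subtract the max for numerical stability; it does not change the result
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical raw scores for (Low, Medium, High)
probs = softmax([-2.0, 1.0, 3.0])
print("Probabilities:", probs)
print("Sum:", sum(probs))
```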

Next, compile it:

# Compile the model

model.compile(

    optimizer='adam',

    loss='sparse_categorical_crossentropy',

    metrics=['accuracy']

)
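To make the loss function less of a black box: for a single example, sparse categorical cross-entropy is the negative log of the probability the model assigned to the correct class. Here is a minimal sketch in plain Python with made-up probabilities. Keras additionally averages over the batch and clips probabilities to avoid log(0), which this sketch omits.

```python
import math

def sparse_categorical_crossentropy(probs, label):
    """Loss for one example: -log of the probability given to the true class."""
    return -math.log(probs[label])

# Hypothetical softmax output for one input: P(Low), P(Medium), P(High)
probs = [0.1, 0.2, 0.7]

loss_if_high = sparse_categorical_crossentropy(probs, 2)  # true class got 0.7
loss_if_low = sparse_categorical_crossentropy(probs, 0)   # true class got 0.1

print("Loss when the true class is High:", loss_if_high)
print("Loss when the true class is Low:", loss_if_low)
```

The more probability the model puts on the right answer, the smaller the loss, which is what training pushes towards.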

Now we can train it using the data we created earlier:

# Train the model

history = model.fit(X_train, y_train, epochs=100, verbose=1)

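Then we can test it on a set of 10 numbers. (This particular row also appears in the training data, so it is a sanity check rather than a test on unseen input.)

# Test the model

test_input = np.array([[5, 5, 5, 5, 5, 5, 5, 5, 5, 5]], dtype=np.float32) # Sum = 50 (should be Medium)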

prediction = model.predict(test_input)

predicted_class = np.argmax(prediction)

print("\nTest prediction:")

print("Input:", test_input[0])

print("Predictions:", prediction[0])

print("Predicted class:", predicted_class, "(0=Low, 1=Medium, 2=High)")

OK. We've created, trained and tested a model. It's not very good, but that's fine for now. We'll try saving it as a .tflite file and as a .onnx file. Then I'll be creating a .NET MAUI app and trying to load the .onnx file and play with it.

Saving the Trained Model

The .NET MAUI App

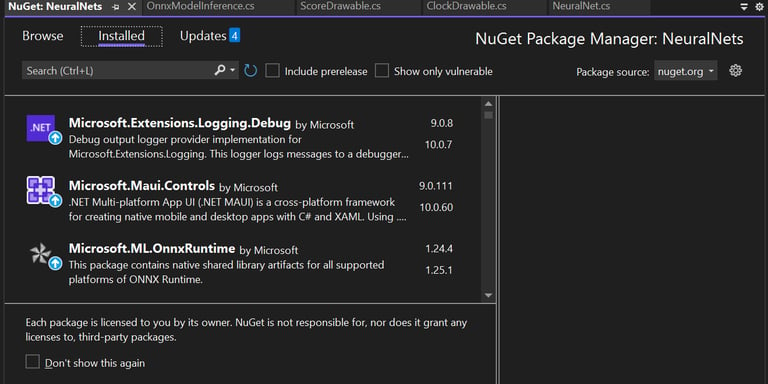

Now we can open Microsoft Visual Studio and create an empty .NET MAUI app. Then right-click on the project and choose "Manage NuGet Packages...". Install Microsoft.ML.OnnxRuntime.

To test the model I just wanted to put it somewhere in the .NET MAUI app so that I could run it on a test input and step through the code looking at its prediction. So, in MainPage.xaml.cs, I added a field to the MainPage class to hold an OnnxModelInference (a helper class that wraps the ONNX Runtime calls; it is not part of the Microsoft.ML.OnnxRuntime package itself):

public partial class MainPage : ContentPage

{

    private OnnxModelInference _model;

Then, in the MainPage constructor, load the model from the ONNX file and attempt to use it to make a prediction:

try

{

    // Assign to the _model field declared above

    _model = new OnnxModelInference("my_model.onnx");

    _model.PrintModelInfo();

    DoAPredict();

}

catch (Exception ex)

{

    DisplayAlert("Error", $"Failed to load model: {ex.Message}", "OK");

}

private void DoAPredict()

{

    try

    {

        // Here are our 10 input features on which we'll make a prediction

        float[] input = new float[] { 10.0f, 10.0f, 10.0f, 10.0f, 10.0f, 10.0f, 10.0f, 10.0f, 10.0f, 10.0f };

        // Run the model

        float[] output = _model.Predict(input);

        string result = string.Join(", ", output.Select(x => x.ToString("F4")));

        ResultLabel.Text = $"Predictions: [{result}]";

        // Get the class with the highest probability

        int predictedClass = Array.IndexOf(output, output.Max());

        ResultLabel.Text += $"\nPredicted Class: {predictedClass}";

    }

    catch (Exception ex)

    {

        DisplayAlert("Error", $"Prediction failed: {ex.Message}", "OK");

    }

}

We'll feed the model 10 numbers and it will tell us whether it thinks they're low, medium or high, based on its training. The output will be an array of 3 numbers representing the probability that the correct answer is low, medium or high. Since, in this example, all our numbers are 10 (maximum value) we'd expect the model to conclude that the highest probability is that they should be classified as high. So the 3rd number in the output array should have the highest value.

When I run the app and step through this code, sure enough I get these values for the output:

0.001092682, 0.3259344, 0.672973

So the model predicted correctly!
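The same argmax step can be sanity-checked outside the app. Here is a small Python sketch using the three values reported above. The `labels` list is only for display and assumes the 0/1/2 encoding used in training.

```python
# Output values copied from the run described above
output = [0.001092682, 0.3259344, 0.672973]
labels = ["Low", "Medium", "High"]  # index matches the 0/1/2 training labels

# Softmax outputs should sum to (approximately) 1
total = sum(output)

# Index of the largest probability, equivalent to
# Array.IndexOf(output, output.Max()) in the C# code
predicted_class = max(range(len(output)), key=lambda i: output[i])

print("Sum of probabilities:", total)
print("Predicted class:", predicted_class, labels[predicted_class])
```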

I've managed to create and train a very simple neural network, use ONNX to transfer it to a .NET MAUI app, and run it in that app. So the principle has been demonstrated. In my next blog post I'll move on to a more interesting neural network with a more genuinely useful application. But that's all for now.

The End

Getting Started - Creating a Demo Neural Network